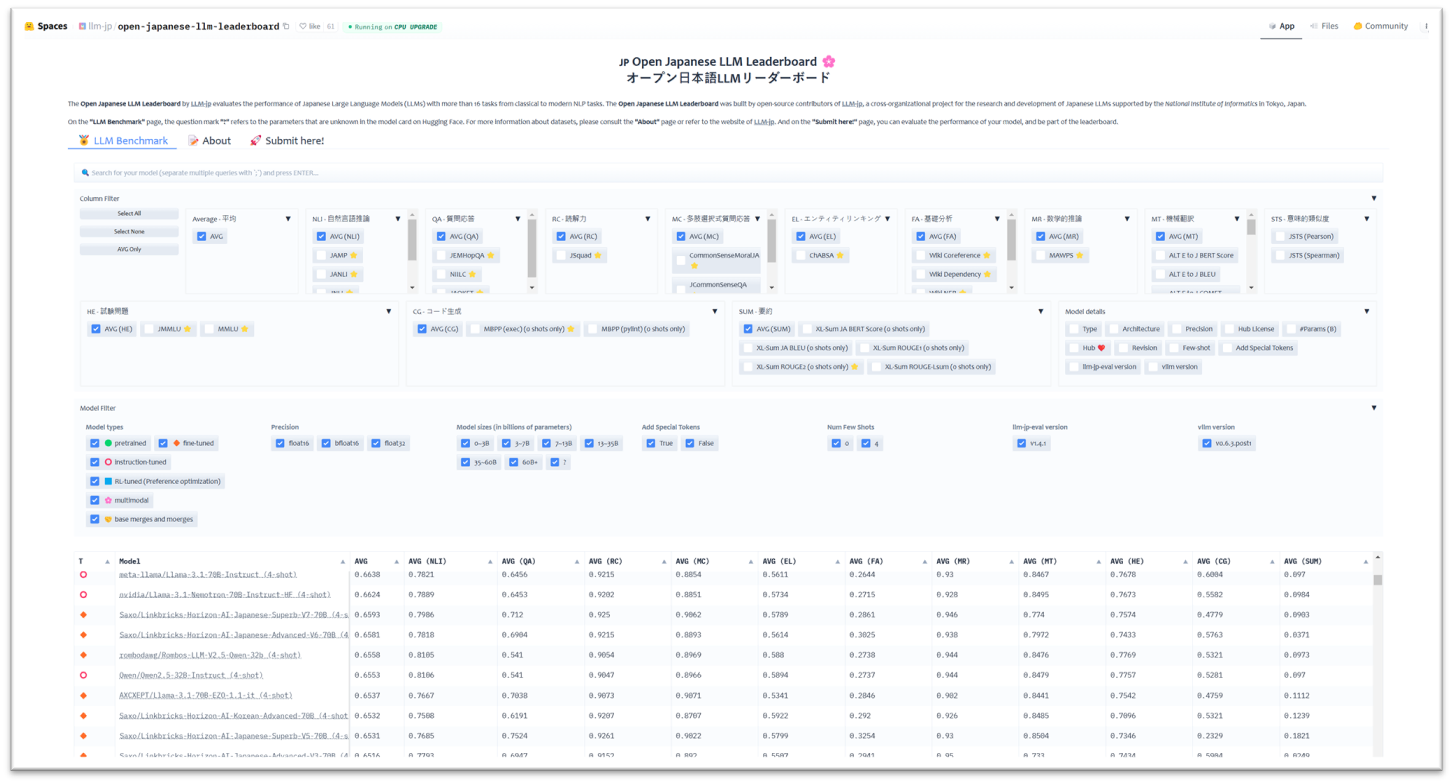

LLM-jp has released the Open Japanese LLM Leaderboard, which uses a specialized evaluation suite, llm-jp-eval, to evaluate the performance of Japanese Large Language Models (LLMs). The suite covers 16 tasks, including Natural Language Inference, Machine Translation, Summarization, Question Answering, Code Generation, and Mathematical Reasoning. The datasets were either compiled by the LLM-jp evaluation team, with support from linguists, experts, and human annotators, or translated automatically into Japanese and adjusted for Japanese specificities.

The Open Japanese LLM Leaderboard shows that Japanese LLMs based on open-source architectures are closing the gap with closed-source LLMs on general language processing tasks. Domain-specific datasets, such as those covering finance, linguistic annotation, code generation, and summarization, remain challenging for LLMs. The evaluation tool will continue to be developed with new datasets and a new evaluation system.

LLM-jp is a cross-organizational project for the research and development of Japanese LLMs, with the aim of developing open-source models. It was launched in May 2023 by the National Institute of Informatics (NII), in collaboration with Hugging Face. LLM-jp has established six working groups (WGs): the Corpus Building WG, Model Building WG, Fine-tuning and Evaluation WG, Computational Infrastructure WG, Academic Domain WG, and Safety WG. The project brings together more than 1,700 participants from universities and companies. In April 2024 it became the LLM Research and Development Center at the NII, which will continue to promote research and development of LLMs in Japan.